How to Better Read Your A/B Testing Results with User Feedback

A/B testing is a smart way to quickly experiment with different copy, design, or process options and decide what works best. According to Optimizely, “A/B testing (also known as split testing or bucket testing) is a method of comparing two versions of a webpage or app against each other to determine which one performs better. A/B testing is essentially an experiment where two or more variants of a page are shown to users at random, and statistical analysis is used to determine which variation performs better for a given conversion goal.”

But you can also use A/B testing for your product and your new features. It isn’t limited to marketing assets or conversion; it’s also a great way to make better decisions faster. Still, A/B testing isn’t flawless: it only gives you quantitative results and won’t help you understand why one version of the tested feature or tweak worked better than the other.
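To make the “statistical analysis” part of that definition concrete, here is a minimal sketch of one common way to compare two variants: a two-proportion z-test on conversion counts. This is an illustration, not the method of any tool named in this article, and the visitor and conversion numbers are made up for the example.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare the conversion rates of variants A and B.

    Returns (z, p_value), where p_value is two-sided.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("each variant needs at least one visitor")
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("no variation in the data; the test is undefined")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers: 120 of 2,400 visitors converted on A, 156 of 2,400 on B.
z, p_value = two_proportion_z_test(120, 2400, 156, 2400)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A small p-value suggests that B really does convert better than A, but the output stops there: nothing in it says why visitors preferred B.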

The “why” of A/B testing

According to VWO, “A/B test hypotheses gathered from users can indirectly solve one of the issues often covered when discussing A/B tests.” However, the same article cites a study by Ron Kohavi and Roger Longbotham that points to a limitation: A/B testing provides quantitative metrics without an explanation. The VWO post argues, “Yes, usually you can verify only which variant is more successful, but you do not understand why. Knowing why the basis for future improvements in order can be to ensure the coherence of your designs.”

In other words, you can run A/B tests and optimize different conversion- or product-related aspects, but you need qualitative data to understand why people behaved a certain way. Otherwise, you’ll see the results of your A/B tests without understanding the reasons behind them. And although you’ll need to go the extra mile to collect qualitative data from your users, you’ll gain a deeper understanding of your customers or leads.

Develop a holistic approach to A/B testing

HubSpot notes, “You might find, for example, that a lot of people clicked on a call-to-action leading them to an ebook, but once they saw the price, they didn't convert. That kind of information will give you a lot of insight into why your users are behaving in certain ways.”

Or, if we’re talking about a product, you may add a useful feature to your platform but discover that your customers don’t use it because the microcopy is confusing. An A/B test can tell you which option gets the best results, yet on its own it leaves you guessing why people act a certain way. So why not develop a holistic approach to A/B testing and collect qualitative feedback from your real users? Wondering how you can do that? We’ve put together a quick list of practices for collecting user feedback:

Run an in-app survey

One of the best ways to gather user feedback is to set up a quick in-app survey. It can be triggered by a specific action your user takes. Whether it’s clicking on a CTA or launching a new feature, you can make the survey pop up and nudge your customer or trial user to answer a quick question related to the A/B test you’re running. Remember, though, that your in-app survey should be easy to complete. You don’t want people to ignore it because it has too many questions. One or two questions are enough. Be succinct and to the point so that you get an answer without disrupting people’s experience of your platform.
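As a rough sketch of the trigger logic described above, the snippet below picks a survey when a tracked action happens, enforces the one-or-two-question limit, and tags each answer with the A/B variant the user saw. The event name, the question text, and every class and function name here are hypothetical; a real survey tool would expose its own API.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Set, Tuple

MAX_QUESTIONS = 2  # keep the survey to one or two questions


@dataclass(frozen=True)
class Survey:
    trigger_event: str          # the user action that opens the survey
    questions: Tuple[str, ...]

    def __post_init__(self):
        if not 1 <= len(self.questions) <= MAX_QUESTIONS:
            raise ValueError(f"a survey needs 1 to {MAX_QUESTIONS} questions")


@dataclass(frozen=True)
class SurveyResponse:
    user_id: str
    variant: str                # which A/B variant the user was shown
    question: str
    answer: str


def pick_survey(event_name: str,
                surveys: Dict[str, Survey],
                answered_events: Set[str]) -> Optional[Survey]:
    """Return the survey tied to this event, unless the user already answered it."""
    if event_name in answered_events:
        return None
    return surveys.get(event_name)


def record_responses(user_id: str, variant: str, survey: Survey,
                     answers: List[str]) -> List[SurveyResponse]:
    """Pair each answer with its question and tag it with the variant."""
    if len(answers) != len(survey.questions):
        raise ValueError("expected one answer per question")
    return [SurveyResponse(user_id, variant, question, answer)
            for question, answer in zip(survey.questions, answers)]


# Hypothetical setup: ask one question right after the upgrade CTA is clicked.
cta_survey = Survey("clicked_upgrade_cta", ("What made you click this button?",))
surveys = {cta_survey.trigger_event: cta_survey}
```

Storing the variant next to each answer is what lets you later compare what users in version A said with what users in version B said.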

Launch an exit survey

The same HubSpot article mentioned above notes, “One of the best ways to ask people for their opinions is through a survey or poll. You might add an exit survey on your site that asks visitors why they didn't click on a certain CTA or one on your thank-you pages that asks visitors why they clicked a button or filled out a form.”

Note that you shouldn’t add this exit survey to all of your pages, only to the ones you’re testing. For example, this can be the plan upgrade page. You can set up an exit survey to launch the moment your user leaves that page without taking any action. So if a paying customer looks at a higher-priced plan and leaves without choosing it, the exit survey launches.

It can be a simple survey with one question and a few answer options. Remember that you want to make it as easy as possible for your users to leave their feedback, and picking from answer options is always easier than filling out an answer field.
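The exit-survey rule above (only on tested pages, only when the user leaves without acting, one question with fixed answer options) can be sketched in a few lines. The page path, the question, and the answer options below are invented for illustration, and the function names are hypothetical.

```python
from collections import Counter

# Hypothetical: the only page under test is the plan upgrade page.
TESTED_PAGES = {"/account/upgrade"}

# Hypothetical one-question survey with predefined answer options.
EXIT_SURVEY = {
    "question": "What stopped you from upgrading today?",
    "options": ["Too expensive", "Missing a feature", "Just browsing"],
}


def should_show_exit_survey(page_path, took_action, tested_pages=TESTED_PAGES):
    """Show the survey only on tested pages, and only if the user did nothing."""
    return page_path in tested_pages and not took_action


def tally_by_variant(responses):
    """Count chosen options per A/B variant.

    `responses` is an iterable of (variant, chosen_option) pairs.
    """
    tallies = {}
    for variant, option in responses:
        if option not in EXIT_SURVEY["options"]:
            raise ValueError(f"unknown answer option: {option!r}")
        tallies.setdefault(variant, Counter())[option] += 1
    return tallies


# Made-up answers from users who left the upgrade page without upgrading.
sample = [("A", "Too expensive"), ("A", "Too expensive"), ("B", "Just browsing")]
print(tally_by_variant(sample))
```

Because the answers are fixed options rather than free text, they can be counted per variant and read side by side with the conversion numbers from the test.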

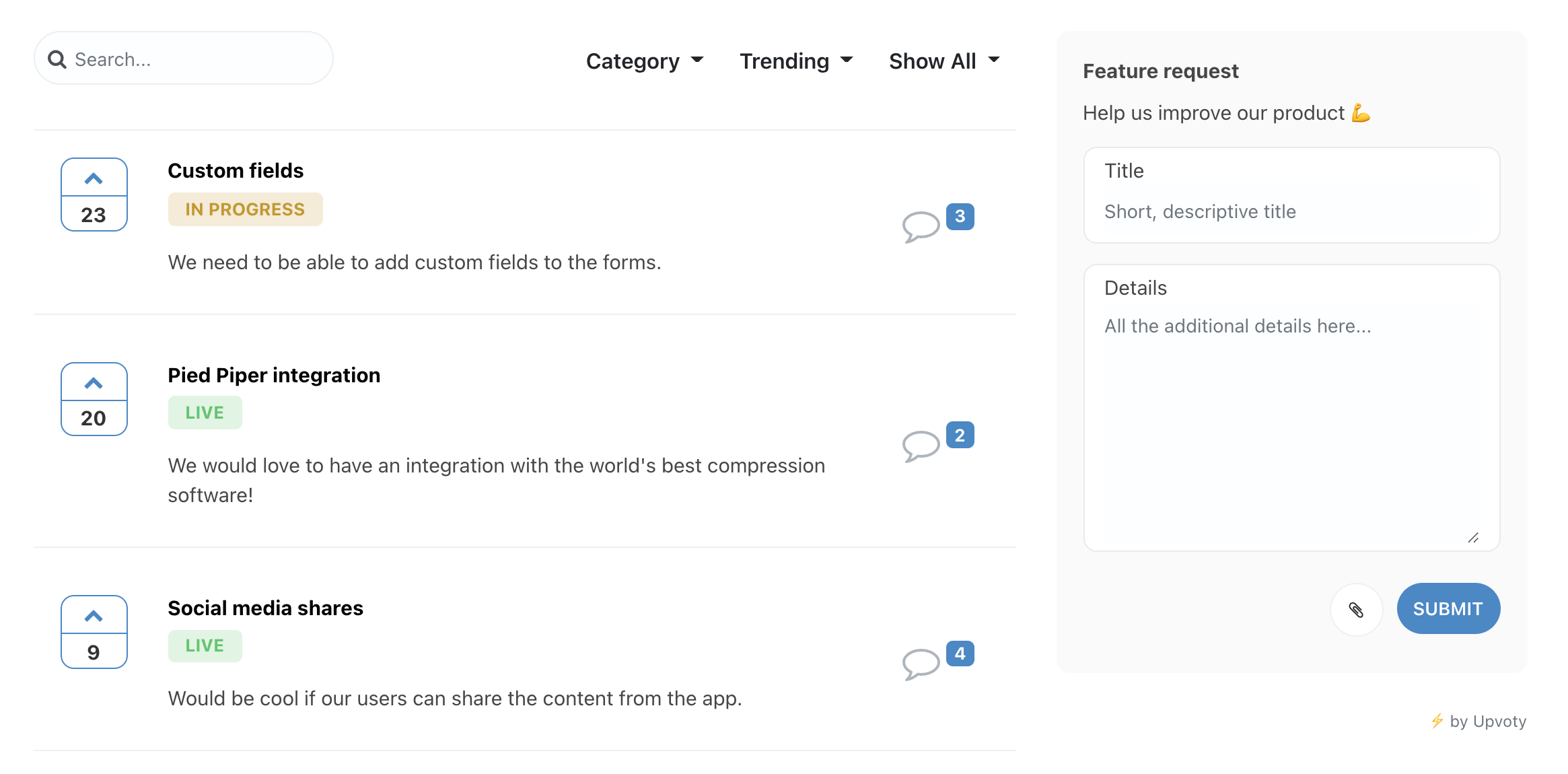

Create a feedback board for your A/B test

A feedback board is where you can manage all of your customer feedback in one place. You can have different feedback boards, such as Bug Fixes, New Feature Requests, Improvements, etc. Your users can choose the board that fits their needs and leave their feedback there. So take advantage of this feature, offered by Upvoty, and create an A/B testing board. Let’s say that you’ve launched a new feature and you’re testing a few details related to its usability. You can create a feedback board and have people submit their thoughts, suggestions, and comments.

Install a feedback box

A feedback box is a simple CTA at the top of a page of your website or application that may say “Give us feedback about this page” or “Give us feedback about this feature.” Your user can click on the box and enter their comments about that page or feature. This option is ideal if you’re A/B testing a specific page and you want to get qualitative feedback from your users.

Reach out to your users directly

Finally, Upvoty firmly believes in building strong relationships with users and customers. As we’ve mentioned in a previous article, “One of the most undervalued methods of getting feedback: talking directly to your customers. We can use all the tools we like, but talking directly to a person is, and will stay, the best way of getting feedback. Each time you have a call, a chat, or other forms of contact, just simply ask them how they think you’re doing, how your product is doing, and what they’re missing. Forming a relationship will reduce churn because you’re building together. You’re bonding.”

ProWritingAid (@ProWritingAid), January 18, 2021: “It would be great if you could suggest this on our new user feedback platform on Upvoty! https://t.co/wbhDqloym2”

There’s nothing more powerful than having direct communication with the people using your platform. So if you want more qualitative data about the A/B tests you run, simply reach out to your users and ask them a few questions.

Recap

A/B testing is great. Yet, on its own, it won’t tell you the reasons behind certain user behaviors. To find out why someone acted a certain way on your website or in your platform, you need to gather qualitative data via user feedback. To do that, you have multiple options:

- Run an in-app survey

- Set up an exit survey

- Create a feedback board specifically for your A/B test (with Upvoty)

- Install a feedback box

- Reach out to your users directly

All these practices will help you collect qualitative data and have greater insights into your A/B testing strategy.